When I saw this post on X, I assumed it was just another LinkedIn-style exit message. But this exit message has a deep story behind it.

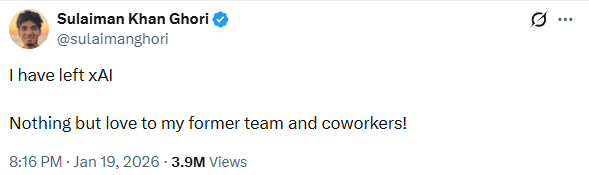

"I have left xAI. Nothing but love to my former team and coworkers!"

Sulaiman Khan Ghori, former xAI engineer

It reads like standard Silicon Valley boilerplate. It's the kind of polite, sanitized exit message you see on LinkedIn every day — the digital equivalent of a firm handshake and a forced smile. But the more I looked at the timestamp, the more unsettled I became. Because this wasn't a transition.

It was an ejection.

On January 15, Sulaiman Khan Ghori, a key engineer at Elon Musk's secretive xAI startup, sat down for a podcast. He was relaxed. He was articulate. He was clearly brilliant.

Four days later, he was out of a job.

To most of us, a podcast is just content. It's background noise while we fold laundry or sit in traffic. But if you've ever worked in high-stakes R&D, you know the unspoken rule: silence is currency. You can code until sunrise, you can sleep under your desk, you can bleed for the product — but the moment you start talking about the roadmap, the clock starts ticking.

An engineer at the world's most secretive AI lab should know better than to treat a microphone like a water cooler.

At first, the interview on the Relentless podcast sounded like a victory lap. It painted xAI as a place of raw, unbridled engineering energy. Ghori spoke passionately about the "flat structure," the aggressive deadlines, and the sheer scale of the "Colossus" supercluster. It felt like good PR.

But he didn't stop there.

Like a thread being pulled from a sweater, the conversation drifted from culture into specifics. And once you start pulling, it's hard to stop. The details didn't just hint at the future; they gave away the blueprint.

The Project Was "Macrohard"

Here's the part that sounds like sci-fi, but isn't.

Ghori detailed a project internally codenamed "Macrohard." The name sounds like a developer's inside joke, but the ambition behind it is deadly serious. The goal wasn't just another chatbot to summarize your emails. It was "human emulators."

He wasn't describing an LLM that writes poetry in the style of Shakespeare. He was describing an AI agent designed to act as a universal employee. It views the screen. It moves the mouse. It types on the keyboard.

It doesn't need an API. It doesn't need backend integration. It just needs a login. It is a digital ghost in the machine, capable of doing your job exactly the way you do it — only faster, and without sleeping.

But the real kicker wasn't the what. It was the how.

When you're training models at that scale, you need power. Ungodly amounts of it. And this is where Ghori casually dropped the bombshell that likely sealed his fate. He didn't just propose a solution to the compute shortage; he revealed a plan to harvest compute from the real world.

Idle Tesla vehicles.

He described a future where the onboard computers of millions of parked cars are tapped to run these AI agents. A distributed supercomputer hiding in plain sight.

Think about the implications. 100 gigawatts of inference power, sitting in driveways from Palo Alto to Shanghai, waiting to be switched on while their owners sleep.

That extra detail mattered.

That's where the line was crossed.

You can talk about the grind. You can talk about the mission. But you cannot confirm a massive, privacy-impacting strategy involving millions of consumer vehicles on a YouTube channel with 5,000 subscribers. This wasn't just a technical leak; it was a regulatory grenade tossed into a crowded room.

Once You Listen, The Cracks Are Everywhere

What really keeps me up about this story is how human the mistake was.

Ghori didn't look like a whistleblower. He didn't look like a corporate spy selling secrets in a parking garage. He looked like a builder high on the fumes of innovation. He talked about Musk's management style with a kind of reverence, describing how Elon would put up an entire Cybertruck as the prize for a 24-hour coding sprint. He talked about the "live by the sword" culture where engineers are thrown into the fire and expected not just to survive, but to forge something new.

He was proud of the scars.

But in that rush of dopamine, he forgot who signs the checks. Elon Musk is famous for extreme compartmentalization. At xAI, secrecy isn't just an HR policy; it is the product's immune system. When you reveal that the company plans to turn the global fleet of Teslas into a distributed supercomputer, you aren't just sharing cool tech specs.

You are lighting a signal flare.

You are triggering a PR storm that the comms team isn't ready to fight. You are tipping off Google and OpenAI. You are inviting regulators to pull up a chair to the table before the table has even been set.

One critic on X called it "pure rookie behavior." A "cardinal sin." And they're right.

If this were just one slip of the tongue, maybe he would survive it. We've all said too much at a party. But the density of information here was staggering. He laid out the roadmap for "human emulators." He detailed the infrastructure bottlenecks. He effectively open-sourced the company's strategic advantage in a 70-minute audio file.

He gave away the "why," the "how," and the "when" — and for a company like xAI, that is everything.

This Was Never Just About A Podcast

If this were a standard Series B SaaS company, maybe this ends with a slap on the wrist. A stern conversation with Legal. A mandatory "training module" on confidentiality that you click through while half-asleep.

But xAI isn't building a CRM.

The velocity of his departure — gone less than 96 hours after the upload finished processing — sends a signal that cuts through the noise louder than any all-hands meeting ever could. It is a brutal reminder that in the current era of the frontier labs, information isn't just data. It's munitions.

We have been conditioned by the "build in public" movement. We scroll through Twitter seeing founders screenshot their MRR and engineers live-streaming their commits. We have deluded ourselves into thinking transparency is the default setting for modern tech.

We forgot the stakes.

In the race for AGI (Artificial General Intelligence), the winning strategy isn't open source. It's the Manhattan Project. When you are potentially building the most powerful technology in human history, opacity isn't a bug. It's the primary feature.

Why This Actually Matters

Sulaiman Khan Ghori was clearly a high performer. You don't get access to the inner sanctum of xAI by being average. He was building the future. He was sleeping at the office. He was the definition of "all in."

But in 2026, loyalty isn't enough. You need discipline.

We trust our engineers with codebases worth billions. We trust them with the private data of millions. But we often forget to check if we can trust them with a microphone.

xAI caught this one. The podcast is still up, but the engineer is gone.

For all our advancements in AGI, for all the talk of superclusters and human emulators, there is still one bug you cannot patch: the human urge to tell a good story.

And it leaves you with a lingering, unsettling thought — that the price of the most interesting job in the world was just 70 minutes of conversation.

Read More. (Substack link) Support our work. (Buy me a Coffee link) Looking for a work from home jobs in 2026 (try our app for free).